Hugin

A native macOS app for talking to language models. Local-first, fast, opinionated about context.

The Name

In Norse mythology, Hugin is one of Odin's two ravens. The name means "thought." He flies out into the world each day and returns with what he's learned.

What It Does

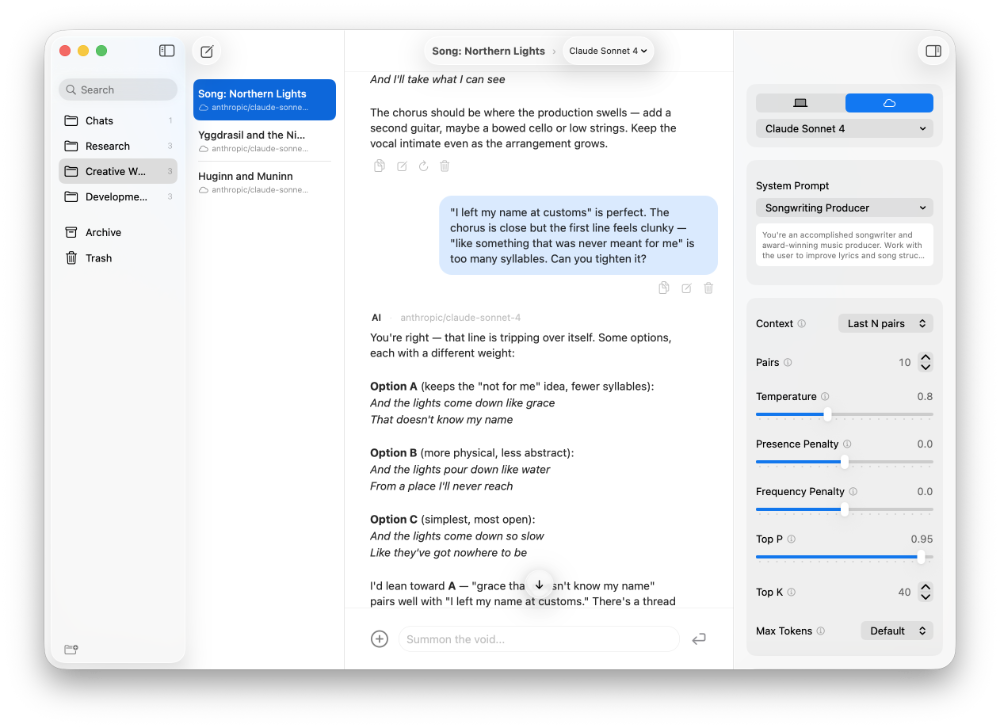

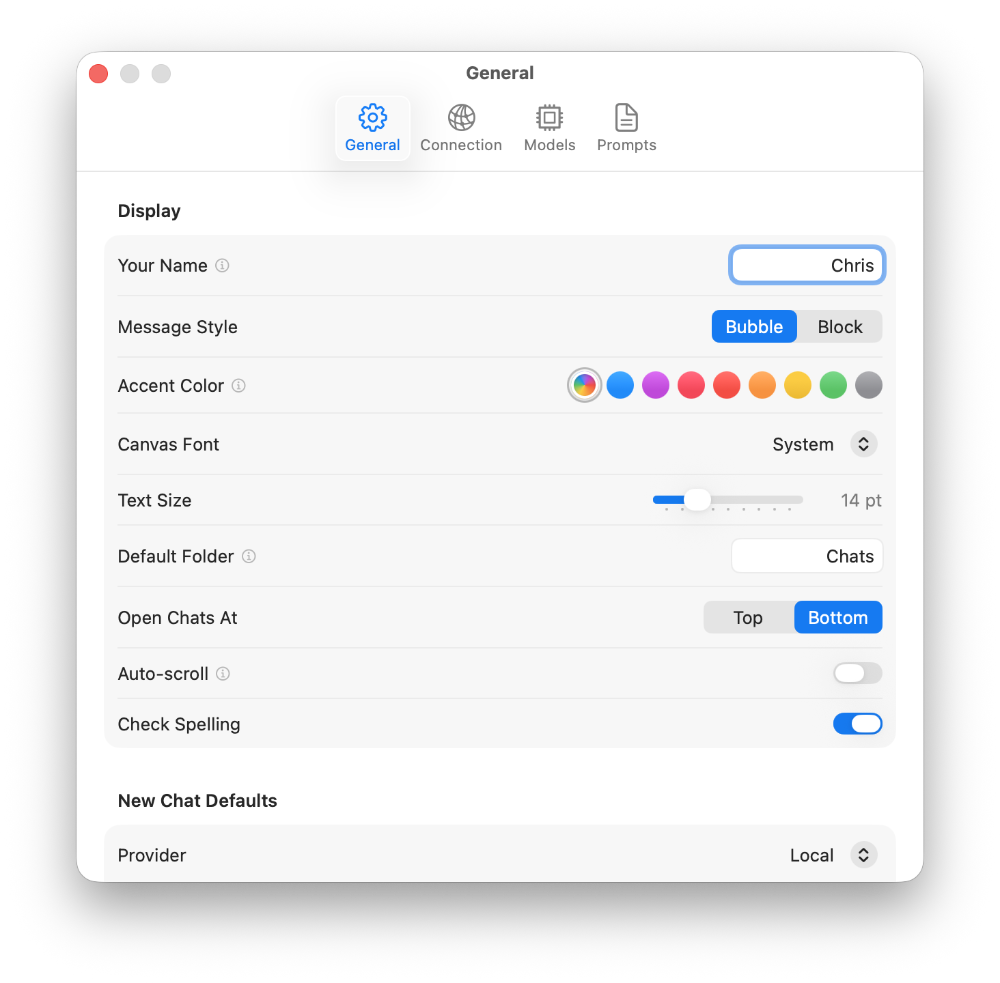

Hugin connects to local models through Ollama and OpenAI-compatible servers, to Apple's on-device Foundation Models, and to cloud models via OpenRouter. Every chat gets its own provider, model, system prompt, and parameters. Nothing is global unless you want it to be.
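As a rough illustration of per-chat configuration with opt-in global defaults, here is a minimal sketch. Every type, property, and model name below (`Provider`, `ChatSettings`, `ChatOverrides`, `temperature`, the placeholder model strings) is hypothetical and chosen for the example; none of it is taken from Hugin's source.

```swift
// Hypothetical types for illustration only; not Hugin's actual data model.
enum Provider: Equatable {
    case ollama
    case openAICompatible
    case appleFoundationModels
    case openRouter
}

/// A fully resolved configuration for one chat.
struct ChatSettings: Equatable {
    var provider: Provider
    var model: String
    var systemPrompt: String
    var temperature: Double   // stands in for "parameters"; an assumption
}

/// What a single chat stores. A nil field means "use the global default".
struct ChatOverrides {
    var provider: Provider? = nil
    var model: String? = nil
    var systemPrompt: String? = nil
    var temperature: Double? = nil

    /// Merge this chat's overrides with the global defaults.
    func resolved(against defaults: ChatSettings) -> ChatSettings {
        ChatSettings(
            provider: provider ?? defaults.provider,
            model: model ?? defaults.model,
            systemPrompt: systemPrompt ?? defaults.systemPrompt,
            temperature: temperature ?? defaults.temperature
        )
    }
}

// Placeholder values; the model names are made up.
let defaults = ChatSettings(
    provider: .ollama,
    model: "example-local-model",
    systemPrompt: "You are a helpful assistant.",
    temperature: 0.7
)

// This chat picks its own provider, model, and temperature,
// but deliberately inherits the global system prompt.
let codingChat = ChatOverrides(
    provider: .openRouter,
    model: "example/cloud-model",
    temperature: 0.2
)
let settings = codingChat.resolved(against: defaults)
```

Storing optionals per chat is one way to get "nothing is global unless you want it to be": a chat that sets every field never touches the defaults at all.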

Context Control

The interesting problem with local LLMs is context. Send too much and the response crawls. Send too little and the model forgets what you were talking about.

Hugin has four context modes. The one I'm most proud of is Adaptive: it starts with minimal context and grows based on how fast the model responds. It learns the optimal turn count per model over time, with no configuration needed. There's also a manual context reset you can drop anywhere in a conversation to tell the model: forget everything above this line.
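To make the idea concrete, here is a minimal sketch of an adaptive turn count plus a reset marker. It is not Hugin's implementation: the latency signal (seconds to first token), the thresholds, the back-off on slow responses, and all names (`AdaptiveContext`, `Entry`, `contextWindow`) are assumptions made for the example.

```swift
// Hypothetical sketch; thresholds and names are assumptions.
struct AdaptiveContext {
    var minTurns = 2
    var maxTurns = 64
    var targetSeconds = 3.0   // assumed latency budget per response
    private(set) var learnedTurns: [String: Int] = [:]

    /// Turn count to send for a model; starts minimal until something is learned.
    func turns(for model: String) -> Int {
        learnedTurns[model] ?? minTurns
    }

    /// Update the learned turn count for a model after each response.
    mutating func record(model: String, secondsToFirstToken: Double) {
        let current = turns(for: model)
        let next: Int
        if secondsToFirstToken < targetSeconds * 0.5 {
            next = current + 1      // well under budget: grow slowly
        } else if secondsToFirstToken > targetSeconds {
            next = current / 2      // over budget: back off quickly (assumed policy)
        } else {
            next = current          // close to budget: hold steady
        }
        learnedTurns[model] = min(max(next, minTurns), maxTurns)
    }
}

enum Entry: Equatable {
    case message(String)
    case contextReset           // "forget everything above this line"
}

/// Messages to send: nothing before the latest reset, then at most `turns`
/// recent messages (messages are used as a simple proxy for turns here).
func contextWindow(_ history: [Entry], turns: Int) -> [String] {
    let afterReset: ArraySlice<Entry>
    if let index = history.lastIndex(of: .contextReset) {
        afterReset = history[(index + 1)...]
    } else {
        afterReset = history[...]
    }
    let messages = afterReset.compactMap { entry -> String? in
        if case .message(let text) = entry { return text }
        return nil
    }
    return Array(messages.suffix(turns))
}

var adaptive = AdaptiveContext()
adaptive.record(model: "example-local-model", secondsToFirstToken: 0.8)  // fast: 2 -> 3
adaptive.record(model: "example-local-model", secondsToFirstToken: 0.9)  // fast: 3 -> 4
adaptive.record(model: "example-local-model", secondsToFirstToken: 5.0)  // slow: 4 -> 2

let history: [Entry] = [
    .message("a"), .message("b"), .contextReset,
    .message("c"), .message("d"), .message("e"),
]
let window = contextWindow(history, turns: 2)   // ["d", "e"]
```

Growing by one and halving on a slow response is only one possible policy; the point is that the learned value is stored per model, so a slow model and a fast one settle at different context sizes without any manual tuning.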

Thinking Out Loud

For reasoning models, Hugin shows a live "thinking peephole" — a small inset panel that streams the last few lines of the model's internal reasoning as it works. You can watch the model think before it speaks.
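One way to keep only the last few lines of a streamed reasoning trace is a small rolling buffer. The sketch below assumes reasoning text arrives as arbitrary string chunks that may split or span lines; the `Peephole` type and its members are hypothetical names, not Hugin's API.

```swift
// Hypothetical sketch of a rolling "last N lines" buffer for streamed text.
struct Peephole {
    let maxLines: Int
    private(set) var lines: [String] = [""]

    init(maxLines: Int) {
        self.maxLines = max(1, maxLines)
    }

    /// Append a streamed chunk, which may contain zero or more newlines.
    mutating func append(_ chunk: String) {
        let parts = chunk.split(separator: "\n", omittingEmptySubsequences: false)
        for (index, part) in parts.enumerated() {
            if index == 0 {
                // The first piece continues the line currently being written.
                lines[lines.count - 1].append(contentsOf: part)
            } else {
                // Every later piece starts a new line.
                lines.append(String(part))
            }
        }
        // Drop the oldest lines so only the most recent ones stay visible.
        if lines.count > maxLines {
            lines.removeFirst(lines.count - maxLines)
        }
    }

    /// Text to show in the inset panel.
    var visibleText: String {
        lines.joined(separator: "\n")
    }
}

var peephole = Peephole(maxLines: 2)
peephole.append("First, con")
peephole.append("sider the input.\nThen check")
peephole.append(" edge cases.\nFinally, answer.")
// peephole.visibleText is now "Then check edge cases.\nFinally, answer."
```

In a SwiftUI view, a value like `visibleText` could back a small monospaced `Text` that updates as chunks arrive; how Hugin actually renders its panel is not described here.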

The Details

Image attachments with automatic compression. Multi-generation navigation so you can regenerate responses and keep every version. Import from ChatGPT, Claude, and LM Studio. Notes-style folders with archive and trash. Optional Brave web search via OpenRouter tool calling. All built in Swift 6 on SwiftUI and AppKit, targeting macOS 26.

Built with AI

I designed Hugin and wrote every line of its code in collaboration with Claude. The architecture, the UI decisions, the edge cases — all of it is a conversation between a person with an idea and a model that can execute.